Summary

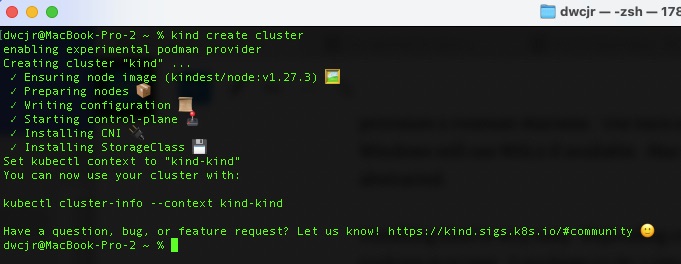

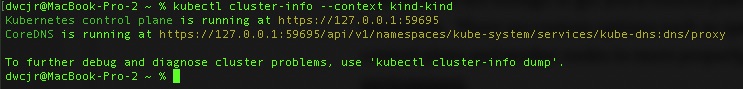

The need for this mix of buzz words is for a very specific use case. For all of my production hosting I use Google Cloud. For my local environment its podman+kind provisioned by Terraform.

Usually to load container images I will build them locally and push into kind. I do this to alleviate the requirement of an internet connection to do my work. But it got my thinking, if I wanted to, couldn’t I just pull from my us.gcr.io private repository?

Sure – I could load a static key in place but I’d likely forget and that could be an attack vector for compromise. I decided to play with Vault to see if I could accomplish this. Spoiler, you can but there aren’t great instructions for it!

Why Vault?

There are a great many articles on why Vault or a secret manager is a great idea. What it comes down to is minimizing the time a credential is valid and to do that using more short lived credentials so if it gets compromised, the longevity of that compromise will be minimized.

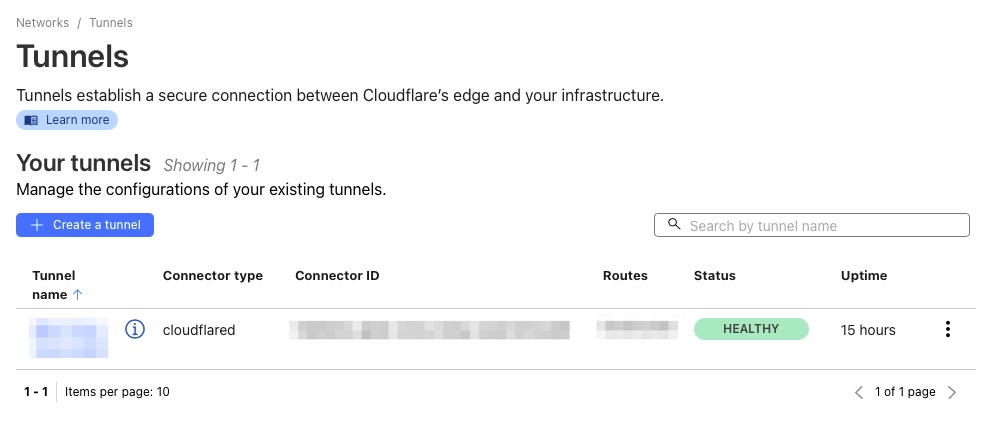

Vault Setup

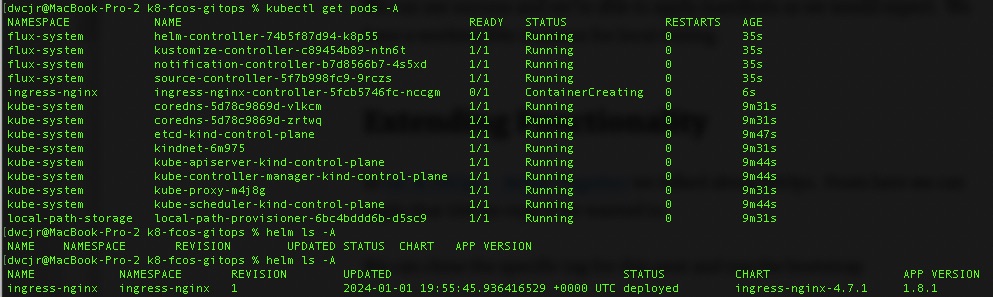

I will not go into full details on the setup but Vault was deployed via helm chart into the K8s cluster and using this guide from HashiCorp to enable gcp secrets

Your gcpbindings.hcl will need to look something like this at a minimum. You likely don’t need the roles/viewer.

resource "//cloudresourcemanager.googleapis.com/projects/woohoo-blog-2414" {

roles = ["roles/viewer", "roles/artifactregistry.reader"]

}For the roleset, I called mine “app-token” which you will see later.

The values I used for vault’s helm chart were simply as follows because I don’t need the injector and I don’t think it would even work for what we’re trying to do.

#vault values.yaml

injector:

enabled: "false"For the Vault Secret Operator it was simply these values as vault was installed in the default namespace. I did this for simplicity just to get it up and running. A lot of the steps I will share ARE NOT BEST PRACTICES but will help you get it up quickly and then be able to learn best practices. This includes disabling client caching and encryption on the storage (which is a default BUT NOT BEST PRACTICE). Ideally client caching is enabled to have near zero downtime upgrades and therefore encrypting the cache in transit and at rest.

defaultVaultConnection:

enabled: true

address: "http://vault.default.svc.cluster.local:8200"

skipTLSVerify: falseVault Operator CRDs

First we will start with a VaultConnection and Vault Auth. This is how the Operator will connect with vault.

apiVersion: secrets.hashicorp.com/v1beta1

kind: VaultConnection

metadata:

name: vault-connection

namespace: default

spec:

# required configuration

# address to the Vault server.

address: http://vault.default.svc.cluster.local:8200

---

apiVersion: secrets.hashicorp.com/v1beta1

kind: VaultAuth

metadata:

name: static-auth

namespace: default

spec:

vaultConnectionRef: vault-connection

method: kubernetes

mount: kubernetes

kubernetes:

role: test

serviceAccount: defaultThe test role attaches to a policy called test policy that looks like this

path "gcp/roleset/*" {

capabilities = ["read"]

}This allows us to read the “gcp/roleset/app-token/token” path. Above should likely be more specific such as “gcp/roleset/app-token/+” to lock it down to specific tokens wanting to be read.

All of this to get us to the VaultStaticSecret CRD.

apiVersion: secrets.hashicorp.com/v1beta1

kind: VaultStaticSecret

metadata:

annotations:

imageRepository: us.gcr.io

name: vso-gcr-imagepullref

spec:

# This is important, otherwise it will try to pull from gcp/data/roleset

type: kv-v1

# mount path

mount: gcp

# path of the secret

path: roleset/app-token/token

# dest k8s secret

destination:

name: gcr-imagepullref

create: true

type: kubernetes.io/dockerconfigjson

#type: Opaque

transformation:

excludeRaw: true

excludes:

- .*

templates:

".dockerconfigjson":

text: |

{{- $hostname := .Annotations.imageRepository -}}

{{- $token := .Secrets.token -}}

{{- $login := printf "oauth2accesstoken:%s" $token | b64enc -}}

{{- $auth := dict "auth" $login -}}

{{- dict "auths" (dict $hostname $auth) | mustToJson -}}

# static secret refresh interval

refreshAfter: 30s

# Name of the CRD to authenticate to Vault

vaultAuthRef: static-authThe bulk of this is in the transformation.templates section. This is the magic. We can easily pull the token but its not in a format that Kubernetes would understand and use. Most of the template is to format correctly the mirror the dockerconfigjson format.

To make it more clear, we use an annotation to store the repository hostname.

Incase the template text is a little confusing, a more readable version of this template text section would be as follows.

{{- $hostname := "us.gcr.io" -}}

{{- $token := .Secrets.token -}}

{{- $login := printf "oauth2accesstoken:%s" $token | b64enc -}}

{

"auths": {

"{{ $hostname}}": {

"auth": "{{ $login }}"

}

}

}Apply the manifest and if all went well you should have a secret named “gcr-imagepullref” which you can use in your “imagePullSecrets” section of the manifest.

In Closing

In closing, we leveraged gcp secrets engine and kubernetes auth to attain time limited OAuth tokens and inject into a secret to use for pulling images from a private repository. There are a number of times you may want to do something like this such as when you’re multicloud but want to utilize one repository or have on-premise clusters but want to use your cloud repository. Instead of just pulling a long lived key, this will be more secure and minimize attack vector.

Following some of the best practices will also help that as well such as limiting the scope of roles and ACLs and enabling encryption on the storage and transmission of the data.

For more on the transformation templating, you can go here.